The Predictability Paradox: Why I Felt Like an Agile Fraud

I’m teaching "Predictable Delivery" soon, but my own Jira board is a disaster. Here’s why predictability is impossible in a system in flux—and why that’s okay.

In two months, I’m walking onto a stage in Vancouver to teach "Achieving Predictable Delivery" at the Global Scrum Gathering. I just looked at my own team’s Jira board. It’s a disaster. If I’m the expert, why does my data look like a random number generator?

I was staring at a burndown chart that looked like a jagged mountain range while polishing my slides. The contrast was embarrassing. My stomach dropped. I’m supposed to stand up and teach people how to forecast work and build trust with stakeholders. Meanwhile? My own delivery metrics are a mess.

I feel like a fraud.

We carry this mess on our shoulders. We internalize the chaos like it’s a personal character flaw. We think if we just help the team more, run a tighter daily scrum, or refine the backlog a little more thoroughly, we can manufacture certainty out of thin air. We convince ourselves that poor predictability is a personal failure of our coaching ability.

It isn't. Predictability is a mathematical impossibility when a system is in flux. Looking at my screen today, I have to admit a hard truth.

I didn't just inherit that chaotic system. I caused it.

The Mathematics of My Own Mistakes

Looking back, I can trace the exact decisions that destroyed our data.

I had a team struggling with focus. They were getting pulled in multiple directions during our standard two-week sprints. I thought I could fix a systemic distraction problem with a process tweak. I moved the team to one-week sprints. I thought the shorter horizon would force a strict "Definition of Done" discipline. I figured we’d finally stop carrying stories over if the wall was only five days away.

I was wrong. The overhead of the events ate our capacity alive. The distractions just felt more painful because the clock was ticking faster. So, a few sprints later, I moved them back to two-week sprints. (Pro tip: more meetings rarely solve a focus problem.)

Shortly after that, project leadership asked to shift our start and end dates to align with stakeholder availability. I said yes. I thought I was being a good partner. I thought I was being adaptable.

I was destroying our ability to answer the most important question in software: "When will it be done?" I didn't just bend the system. I broke it.

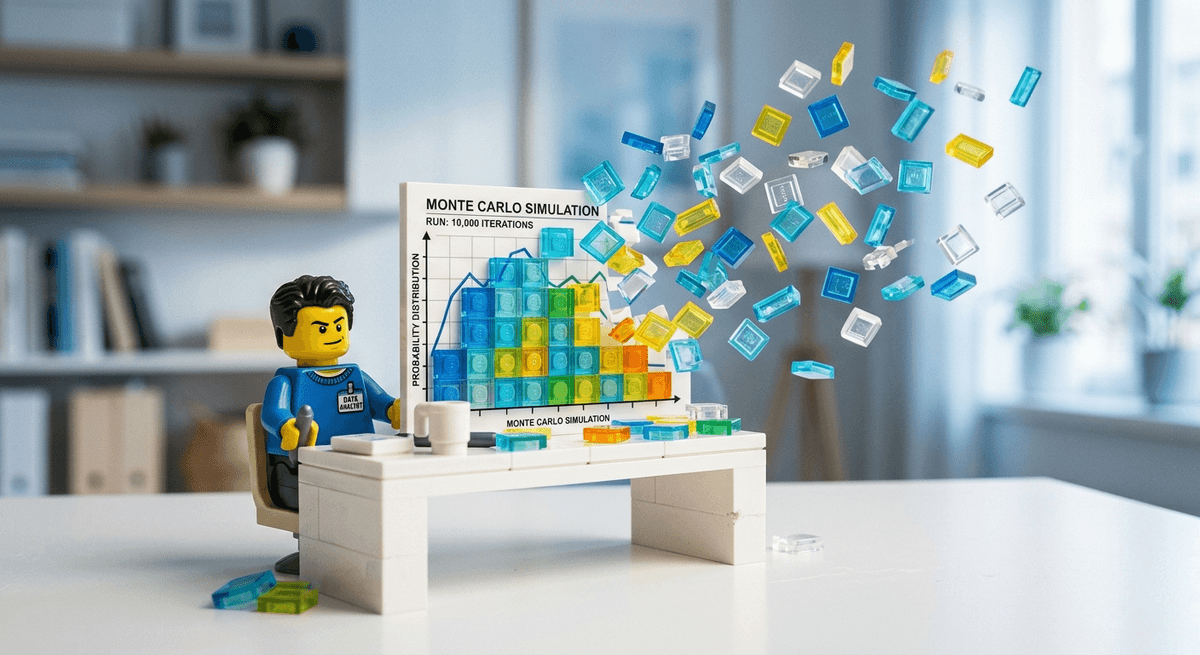

Here’s the cold, hard math of forecasting. If you want to know when a team will finish, you need to start thinking in bets: probabilistic forecasts, not deterministic guesses. You need historical data. You take that data and run a Monte Carlo simulation, which replays your past throughput thousands of times to produce a range of possible finish dates. From that range you read off a confidence level. It tells you that you have, say, an 85 percent chance of finishing the scope by a specific date.
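To make that concrete, here is a minimal sketch of a throughput-based Monte Carlo forecast. It assumes you track items finished per sprint. The function name, the sample history, and the 10,000 trials are illustrative choices of mine, not output from any particular tool.

```python
import math
import random


def forecast_sprints(history, remaining_items, trials=10_000,
                     confidence=0.85, seed=42):
    """Estimate how many sprints are needed to finish `remaining_items`.

    `history` is a list of items finished per past sprint (an assumption:
    every entry comes from the same team and the same sprint length).
    Returns the smallest sprint count that at least `confidence` of the
    simulated futures finished within.
    """
    if not history or max(history) <= 0:
        raise ValueError("history needs at least one sprint with finished items")

    rng = random.Random(seed)  # fixed seed so the sketch is repeatable
    outcomes = []
    for _ in range(trials):
        done, sprints = 0, 0
        # Replay the future by drawing a random past sprint each time.
        while done < remaining_items:
            done += rng.choice(history)
            sprints += 1
        outcomes.append(sprints)

    outcomes.sort()
    # Index of the trial that sits at the requested confidence level.
    index = min(len(outcomes) - 1, math.ceil(confidence * len(outcomes)) - 1)
    return outcomes[index]


# Hypothetical history: items finished in each of the last six sprints.
sprints_needed = forecast_sprints([4, 7, 5, 6, 3, 8], remaining_items=40)
print(f"85% of simulated futures finish within {sprints_needed} sprints")
```

Notice what the sketch quietly assumes: every number in `history` was produced by the same system you are forecasting for.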

But these simulations have a fatal sensitivity. They require stable inputs.

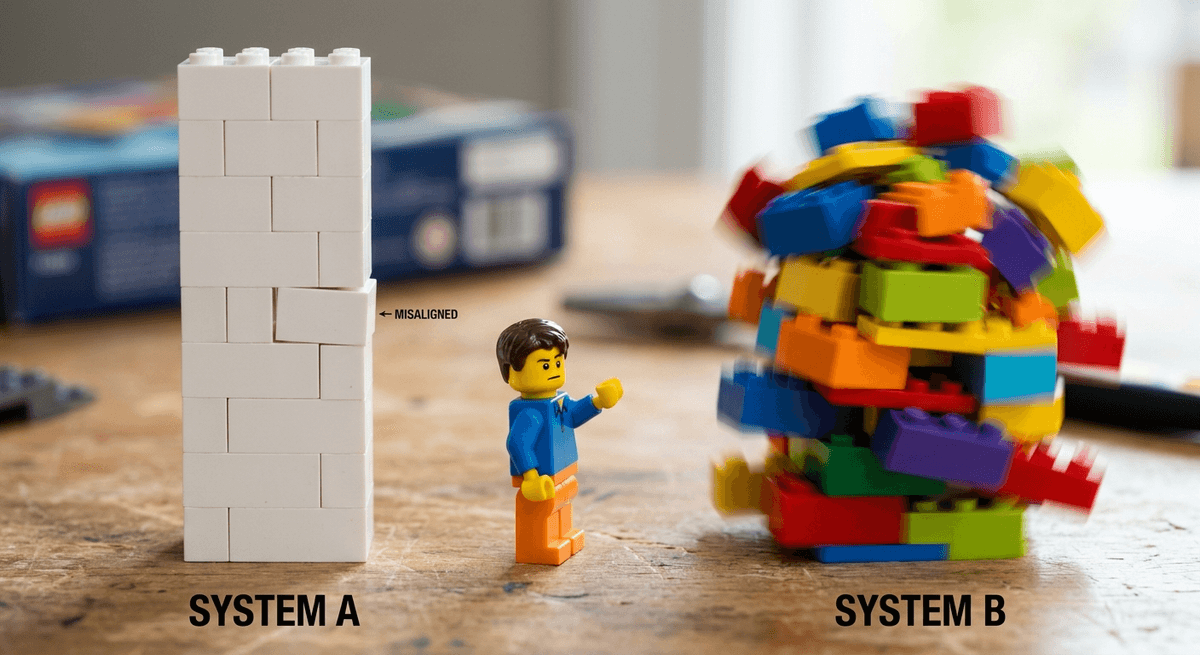

Change the input variables—the team size, the sprint boundaries, the domain context—and your historical data is instantly void. You cannot mathematically predict the future of System B using the historical data of System A. When I changed our sprint lengths and boundaries three times in a single quarter, I wiped our historical baseline clean.
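One way to respect that boundary in practice is to feed a forecast only with sprints recorded under the current configuration. This is a minimal sketch, assuming each sprint is logged with its length and team size. The `SprintRecord` fields and the `usable_history` name are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SprintRecord:
    sprint_length_days: int  # working days in the sprint
    team_size: int
    items_finished: int


def usable_history(records, current_length, current_team_size):
    """Return throughput only from the unbroken run of sprints that match
    the current configuration, walking backward from the most recent one."""
    usable = []
    for record in reversed(records):
        same_system = (record.sprint_length_days == current_length
                       and record.team_size == current_team_size)
        if not same_system:
            break  # an earlier configuration is "System A": stop here
        usable.append(record.items_finished)
    usable.reverse()  # restore chronological order
    return usable


# Hypothetical quarter: two-week, then one-week, then two-week sprints again.
quarter = [
    SprintRecord(10, 5, 20),
    SprintRecord(5, 5, 9),
    SprintRecord(5, 5, 11),
    SprintRecord(10, 5, 18),
    SprintRecord(10, 5, 22),
]
print(usable_history(quarter, current_length=10, current_team_size=5))
```

Five sprints of data shrink to two once the cadence changes are taken seriously, which is far too little to forecast from.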

This is why I now treat system stability as a prerequisite for any conversation about deadlines. I was teaching predictability while operating a system that was mathematically incapable of being predicted.

The Cost of Playing Musical Chairs

My cadence changes were bad enough. But what happens when leadership decides to mess with the humans on the team?

Ever faced a situation where leadership wants to make a big move right when things are critical? I was chatting with another Scrum Master recently about a team they support. Leadership was about to move their lead developer to another project. The current team was 75 percent through a twelve-week project. They were slightly behind schedule and facing a tight deadline.

The organizational plan was simple: Replace the lead developer with a developer from a different team.

Management thinks this is a simple capacity swap. They think people are interchangeable Lego bricks. They assume that if you trade one senior engineer for another, the velocity stays the same.

Dynamic reteaming is the reality in modern SaaS—I get it. But the mathematical cost doesn't care about your reality. According to Mountain Goat Software, losing a team member or swapping a key player drops velocity by 17 to 50 percent. It is never a one-to-one swap. The interdependent knowledge network of the team is severed and has to be rebuilt.

It gets worse. When you force people to switch contexts or split their focus, the penalty is massive. Analysis of 224,000 pull requests by Haystack revealed a terrifying metric. Context-switched work takes 223 percent longer to complete.

Two hundred and twenty-three percent. For work of the exact same complexity.

This applies to the entire delivery flow you are trying to manage. You cannot estimate your way out of a 223 percent cycle time penalty.
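Here is the arithmetic behind those two figures as a small sketch. I am reading "223 percent longer" as the baseline plus 223 percent of the baseline, which is my interpretation of the number. The three-day task and the 30-point velocity are made-up inputs.

```python
def context_switched_duration(baseline_days, percent_longer=223):
    """Duration of a task once the quoted context-switching penalty applies."""
    return baseline_days * (1 + percent_longer / 100)


def velocity_after_swap(velocity, drop_percent):
    """Velocity remaining after a drop of `drop_percent` percent."""
    return velocity * (1 - drop_percent / 100)


# A task that would take 3 days of focused work (hypothetical input).
print(round(context_switched_duration(3), 2))  # 3 * 3.23

# A 30-point team at both ends of the quoted 17 to 50 percent range.
print(round(velocity_after_swap(30, 17), 1), round(velocity_after_swap(30, 50), 1))
```

On that reading, a three-day task stretches to almost ten days, and a 30-point team lands somewhere between roughly 25 and 15 points.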

The Illusion of Fake Predictability

So how do teams survive this? How do organizations constantly reorganize, swap people around, and still manage to hit their arbitrary release dates?

They lie.

When a system is unstable, true predictability is a myth. If the dates are still being met, the team is engaging in "fake predictability." I’ve seen this happen in almost every enterprise I’ve worked with. Teams forced into unstable environments will naturally resort to survival mechanisms like "weekend heroism" or cutting corners on the Definition of Done.

If you listen to Dan Vacanti and Prateek Singh on Drunk Agile, they’ll tell you that your data is only as good as your system's stability. If the system is vibrating like a tuning fork, your forecast is just a guess with a fancy name.

The math is simple. When your system gets stable and your units of work get small enough, the cone of predictability narrows. You don't need to be a psychic. You just need a system that isn't being constantly disrupted.

This is why I hate story point obsessions. They’re a red flag for a system that’s already broken. We are trying to manufacture certainty for stakeholders who demand a date, but we are doing it by ignoring the reality of the system we’ve built. If you don't have stable teams, you don't have a predictable system. Period.

Stop Manufacturing Certainty

I felt like a fraud because I was trying to give my stakeholders what they wanted: a guaranteed date in an un-guaranteed environment. I thought my job was to make the metrics look "predictable" even when the organizational reality was anything but.

The breakthrough for me was realizing that my value isn't in creating a perfect burndown chart. My value is in providing the transparency that allows the business to make actual decisions.

If the system is unstable, the most "Agile" thing you can do is expose that instability, not hide it behind padded estimates. We have to stop being the "protectors" of the schedule and start being the "narrators" of the reality.

If you are a Scrum Master or delivery lead feeling like a fraud today because your metrics are a mess, try this Monday, y'all:

1. Stop protecting the metrics. If leadership changes the team composition or the sprint cadence, let the data reflect the chaos. Don't ask the team to "work harder" to keep the velocity steady. If velocity drops from 30 points to 12 because you lost a lead dev, show that drop. Data is that third person in the conversation who doesn't have an ulterior motive. Let it speak.

2. Show the system to itself. Visualize the hidden costs of instability. Use the data to highlight the spike in hours of effort and the erosion of stability that occurs whenever the flow is disrupted. Provide the script: "We can move Sarah to the other project, but we need to account for the systemic cost. Our data shows that this kind of shift typically destabilizes throughput and increases the effort required for remaining tasks. If we prioritize this move, we should adjust our expectations for the current delivery date to reflect that reality."

3. Forecast based on today, not a dream. If your system is in flux, stop looking at your "best ever" sprint as the baseline. Use your last 2-3 sprints of actual throughput. If it’s erratic, your forecast should be a wide range, not a single date. It is better to be vaguely right than precisely wrong.
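The third step can be sketched in a few lines. This is a rough range forecast, assuming throughput is counted in items per sprint. The function name and sample numbers are hypothetical, and the result is a crude best-case and worst-case bracket, not a probabilistic forecast.

```python
import math


def range_forecast(throughput_history, remaining_items, window=3):
    """Return (earliest, latest) sprints to finish, based only on the
    fastest and slowest of the last `window` sprints."""
    recent = throughput_history[-window:]
    best, worst = max(recent), min(recent)
    if best <= 0:
        raise ValueError("no finished work in the recent window")
    earliest = math.ceil(remaining_items / best)
    # A zero-throughput sprint means there is no honest upper bound.
    latest = math.ceil(remaining_items / worst) if worst > 0 else None
    return earliest, latest


# Hypothetical history: the "best ever" sprints (30, 28) fall outside the window.
print(range_forecast([30, 28, 12, 18, 9], remaining_items=60))  # (4, 7)
```

An erratic window gives a wide answer, four to seven sprints here, and that width is the honest message for stakeholders.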

I’m still going to Vancouver. I’m not changing my talk, but I’m adding a new case study to the mix: this one. I’m going to teach them how to handle the fraud of certainty.

You cannot force predictability onto an unstable system. You can only measure the flow of the system you actually have.

Stop fighting for perfect metrics. Start fighting for the truth.

Now go break something.

